Imperial College Antibody Study Debunks Itself

Per authors: people who had been exposed to the virus were less likely to take part over time, which contributed to apparent population antibody waning.

An Imperial College study on SARS-CoV-2 antibodies made headlines last week, as the BBC and other outlets incited fears about coronavirus immunity waning “quite rapidly” after infection.

When reading the following example, bear in mind that the functioning of the human bloodstream would be impaired after illness if antibody levels never waned.

Yesterday, a team from Imperial College London found the number of people testing positive for antibodies has fallen by 26% between June and September. They say immunity appears to be fading and that there is some risk of catching the virus more than once. It is hard not to be overrun by fear.

Despite the study appearing to show very normal clinical signs of antibody waning, it was widely seen through the media’s panic-stricken lens as proof that meaningful SARS-CoV-2 immunity cannot be conferred.

Many commentators (including this group of Israeli scientists) have pointed out that antibodies always decrease over time and that there are other indicators of lasting immunity to a given virus not captured by the study, such as T cell response.

The data themselves provide no evidence that antibodies do not persist over the timescale necessary to aid in the process of reaching herd immunity. A recent publication in Science adds to this interpretation.

But here we should focus on a different challenge: the basic methodology.

The Imperial College authors are not responsible for how their work is slotted into the coronavirus culture wars. They are, however, responsible for the work itself. And a quick look at the study design reveals that this paper does not establish cause & effect. In fact, the authors tell us so:

Our study has limitations. It included non-overlapping random samples of the population, but it is possible that people who had been exposed to the virus were less likely to take part over time, which may have contributed to apparent population antibody waning.

Why the “but”? Let’s extract the core message:

People who had been exposed to the virus were less likely to take part over time, which contributed to apparent population antibody waning.

The common-sense way to measure variability in antibody response would be a longitudinal study. Inherently, that requires that data be drawn from the same individuals, tracked at different points in the epidemic. Investigators would simply anonymize by assigning a patient ID to each member of the first group and stick with them.

The Imperial antibody study instead looks at test results from different “random samples” of the population at different points of the UK epidemic. Worse, the random sample isn’t very random: it selects for those who would fill out a 35-page information sheet and self-report Lateral Flow Immunoassay home test kit results.
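The limitation the authors concede can be made concrete with a little arithmetic. The sketch below is purely illustrative: every number in it is hypothetical, the test is assumed to be perfect, and the function name `observed_prevalence` is my own. It holds the true share of antibody-positive people constant and only lets exposed people become less likely to respond in later rounds.

```python
# Hypothetical illustration: none of these numbers come from the Imperial study.

def observed_prevalence(true_prevalence, response_exposed, response_unexposed):
    """Expected share of antibody-positive respondents in one cross-sectional round.

    Assumes a perfect test and that participation depends only on exposure status.
    """
    positives = true_prevalence * response_exposed
    negatives = (1.0 - true_prevalence) * response_unexposed
    return positives / (positives + negatives)


TRUE_PREVALENCE = 0.06                          # assumed, held constant across rounds
RESPONSE_UNEXPOSED = 0.30                       # assumed response rate, never exposed
RESPONSE_EXPOSED_BY_ROUND = [0.30, 0.25, 0.22]  # assumed response rate, exposed

observed_by_round = [
    observed_prevalence(TRUE_PREVALENCE, rate, RESPONSE_UNEXPOSED)
    for rate in RESPONSE_EXPOSED_BY_ROUND
]

for round_number, prevalence in enumerate(observed_by_round, start=1):
    print(f"Round {round_number}: observed prevalence {prevalence:.1%}")
```

With these made-up inputs the observed figure falls from round to round even though the underlying prevalence never moves. Repeated cross-sections cannot separate that artifact from genuine waning, whereas following the same individuals would be far less exposed to it.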

What follows from this difficult setup is the impromptu development of a brand new study design, likely published because someone spent money on all these tests. We can call it the Imperial Method.

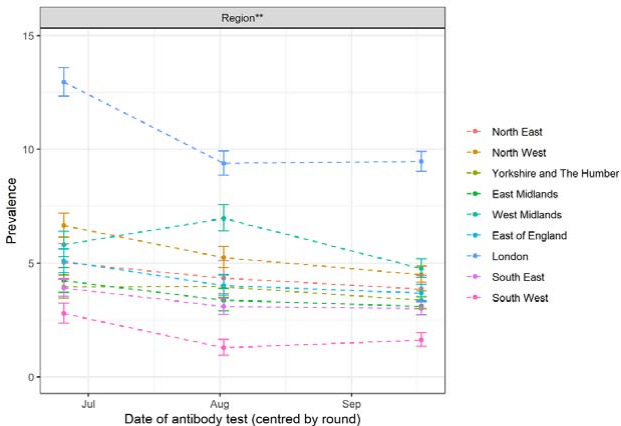

To demonstrate just how innovative this is: the Imperial Method allows it to look as though antibodies rise in people who are from the right place. Has Imperial discovered a brand new form of viral immunity? See Figure 2. It’s subtle, but apparently the South West UK strain of SARS-CoV-2 is quite novel.

Most results in Figure 2, of course, flatline after August. We see an initial decline, and then prevalence plateaus. So, to reiterate, if we take this study at face value it’s showing a reassuring antibody response. Apparently there’s no drop-off of antibody response after the first month. Good news for vaccine development and our long-term prospects when it comes to herd immunity.

Figure 3 manages to compensate somewhat for the increase in antibody prevalence in some areas by fitting a line to the local authority results. And yet, seroprevalence goes up for some local authorities no matter how many lines the authors fit on there.

Figure 4 is an epidemic curve with an arrow labeled “lockdown” to make Neil Ferguson happy. It doesn’t have much to do with the study.

So we have a strange study, a sensationalist press response, and authors who are defensive of (or warning us about) the study design within their own conclusion. The work ends with an obtuse table formatted in such a way that no academic is likely to reanalyze it without a research assistant.

…How much did this study cost the British public?

Further Analysis

I spoke with an academic who is a fellow member of Pandemics - Data & Analytics (PANDA) on various issues with the study. See below for a summary of his thoughts.

Summary of Methodological Issues:

The study is not longitudinal. That is, the same people were not tested in each phase. Any sensible study design would have followed up on the first tests and tracked the cohort. However, this could have led to an awkward situation if some test result sequences were positive, negative, positive.

The study claims to be based on a random sample when in fact the sample is mainly self-selecting. This is because the process of signing up for the study is so onerous that very few people would be motivated to jump through all the hoops needed to participate.

Different-sized samples at different phases. The sample size increases over time. This can be controlled for statistically, but it suggests that something else has been changing.

Effect of non-discrete sampling periods not fully accounted for.

Non-return of test results neither discussed nor explained. Across all three rounds, 37.7% of those invited registered, and 29.9% provided a valid IgG positive or negative result, which implies that roughly 20% of all tests sent out were not returned with a valid result. Why were the tests not returned? Were they positive tests that people were worried about submitting?
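The arithmetic behind that figure is easy to check. The sketch below assumes that everyone who registered was sent a kit, which the summary implies but does not state, and it counts invalid results together with kits that never came back. The variable names are my own.

```python
# Shares of all invited people, as quoted above.
registered_share = 0.377     # registered for the study
valid_result_share = 0.299   # provided a valid IgG positive or negative result

# Assumption: every registrant was sent a test kit.
gap_in_points = registered_share - valid_result_share
non_return_share = gap_in_points / registered_share

print(f"Gap: {gap_in_points * 100:.1f} percentage points of those invited")
print(f"Kits with no valid result: {non_return_share:.1%} of those sent out")
```

Under that assumption the gap is 7.8 percentage points of those invited, or a little over a fifth of the kits sent out, which is consistent with the roughly 20% quoted.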

The study misleadingly implies a direct link between the prevalence of positive test results in the population and individual-level immunity. The results can only be interpreted at the population level, which is the level at which the study sampled.

Subgroup analysis is not discussed in depth. Heterogeneity between the group responses deserves much more attention and requires explanation.

The characteristics of the test mean that results are not appropriate for clinical use in individuals and participants are advised not to change their behavior based on the result. The implications of this statement are not made explicit with respect to the test reliability.

Statements such as “increasing risk of reinfection as detectable antibodies decline in the population” are not justified by the results of this study.

Specificity and sensitivity of the test not measured under study conditions.

The paper provides very limited evidence of waning protection through antibodies or any other immune response. The paper can only be interpreted in a very narrow technical sense as providing estimates of the prevalence of positive results from Lateral Flow Immunoassay home test kits, during three rounds of a serial cross-sectional study of adults in England, UK, carried out between June and September 2020 on a self-selecting, non-random sample of adults.

Overall, “the paper raises serious issues regarding the underlying ethics of scientific publishing in the pandemic period.”